Imagine you’re climbing Mount Everest in a thick fog with amnesia. You can see only as far as your outstretched hand. You don’t know where you are or what direction to head in, only that you need to reach the peak. What are the odds you do?

Probably as low as the mountain is high.

As dramatic an analogy as this is, it’s been made in reference to the foundation of a popular A/B testing method. That peak is the best version of your post-click landing page, and you’re confused, nearly blind, and lost on the mountainside of your current page.

What’s worse, you’ve probably been handed an incorrect map by your favorite marketing bloggers. You might think you’re A/B testing the right way — that you’re headed in the direction of that peak. Chances are, though, you’ll soon hit a dead end, and you’ll never find the highest-performing variation of your post-click landing page.

The A/B testing myth that confuses beginners

Some of the most popular marketing blogs on the internet continue to perpetuate an A/B testing myth that dooms beginners from the start. It victimized me when I started, too.

“Don’t change more than one page element per A/B test,” I kept reading, over and over. I didn’t question it because the sources were trustworthy and, mostly, because it just made sense.

The point of A/B testing is to gather data you can use to optimize a page, email, advertisement, or whatever you happen to be testing. How was I supposed to know what caused the change in conversion rate at the test’s conclusion if I made more than one adjustment between versions “A” and “B”? What kind of data would that be?

So, I tested elements one at a time: headline of post-click landing page “A” vs. headline of post-click landing page “B.” Next, it was the blue button on post-click landing page “A” vs. the green button on post-click landing page “B.” This was how the pros and Amazon and Google did it. If it was good enough for multi-billion dollar companies, it was good enough for me.

The problem I overlooked, which became painfully apparent months later, is that I wasn’t Amazon or Google. Their websites generate so much traffic that the small lifts gained from these frivolous button color tests can translate to millions of dollars in revenue.

For most people, though, they’re a waste of time and resources. For most people, using this A/B testing method is like climbing Mount Everest in a thick fog with amnesia.

The hill-climbing heuristic

In our everyday lives, there are problems we encounter for which the solution is clear. But, what happens when you’re faced with an unfamiliar and largely unique obstacle? Take this maze, for instance:

There are no blog posts written about the best way to navigate this particular maze. Your friend (most likely) can’t guide you through it. You have no map. So, how do you get from A to B?

The answer can be found in a computer program called “The General Problem Solver,” built in 1963 by Newell, Simon, and Shaw to study artificial intelligence. Their research has also been applied to human problem-solving. Dr. Russ Dewey explains:

Newell and Simon defined each problem as a space. At one end of the space is the starting point, on the other side is the goal. The problem-solving procedure itself is conceived as a set of operations to cross that space, to get from the starting point to the goal state, one step at a time.

In the General Problem Solver, the program tests various actions (which Newell and Simon called operators) to see which will take it closer to the goal state. An operator is any activity that changes the state of the system. The General Problem Solver always chooses the operation that appears to bring it closer to its goal. This tactic is called hill climbing, because it resembles the tactic of always taking a step toward the top of a hill or mountain.

In the maze above, each dead end is a “space” — a problem you need to overcome with “operations,” which are actions that bring you closer to your goal (getting from “A” to “B”).

So, you start at “A” and follow the path you think will take you to “B” the fastest. When you hit a dead end, you go back and try an alternate route. You repeat the process until you’ve reached your goal.
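The "go back and try an alternate route" procedure can be sketched as a depth-first search with backtracking. This is only an illustration: the grid, the `solve_maze` function, and the `.`/`#` encoding are made up for the example and are not the maze pictured in this post.

```python
def solve_maze(grid, start, goal):
    """Depth-first search with backtracking; returns a path of cells or None."""
    path, visited = [start], {start}
    while path:
        row, col = path[-1]
        if (row, col) == goal:
            return path
        # Try each direction in turn: right, down, left, up.
        for d_row, d_col in ((0, 1), (1, 0), (0, -1), (-1, 0)):
            r, c = row + d_row, col + d_col
            inside = 0 <= r < len(grid) and 0 <= c < len(grid[0])
            if inside and grid[r][c] == "." and (r, c) not in visited:
                visited.add((r, c))
                path.append((r, c))
                break
        else:
            # Dead end: step back and try an alternate route.
            path.pop()
    return None


# Hypothetical maze: "." is open floor, "#" is a wall.
maze = [
    "..#",
    ".##",
    "...",
]

route = solve_maze(maze, start=(0, 0), goal=(2, 2))
print(route)
```

In this small grid the search first walks right into a dead end, backs up, and then finds the route down and across.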

This is what you’re doing when you’re A/B testing small elements. You identify a problem, like an unnoticeable button, for example. Then, you inch closer to your goal of creating the best version of your post-click landing page (or so you think) by testing what you hypothesize is a better one. If that doesn’t work, you test another.

Eventually, though, you’ll reach a point of diminishing returns called the “local maximum.”
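To make the hill-climbing tactic concrete, here is a minimal sketch in Python. The `landscape` list is a set of hypothetical conversion rates invented for illustration, where each position stands for a page that differs from its neighbours by one small change. It is a toy model, not how any testing tool works internally.

```python
def hill_climb(scores, start):
    """Greedy hill climbing: keep stepping to the better neighbour until none is better."""
    position = start
    while True:
        # Only immediate neighbours are considered (one small change at a time).
        neighbours = [i for i in (position - 1, position + 1) if 0 <= i < len(scores)]
        best = max(neighbours, key=lambda i: scores[i])
        if scores[best] <= scores[position]:
            return position  # no neighbour improves on this spot: a peak, maybe only a local one
        position = best


# Hypothetical conversion rates (%) for a row of page variations.
landscape = [1.0, 1.5, 2.0, 1.8, 1.2, 2.5, 4.0, 6.0, 5.0]

stuck_at = hill_climb(landscape, start=0)
print(stuck_at, landscape[stuck_at])  # stops at index 2 with a 2.0% rate
print(max(landscape))                 # the best page in the landscape converts at 6.0%
```

Starting from the first page, every small step is an improvement until index 2, where both neighbours look worse. The climb stops there even though a far better page exists further along.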

The local maximum and the global maximum

The “hill-climbing” heuristic has a major limitation: it can leave you scratching your head on a hill, looking up at the peak of the mountain where your best post-click landing page is. Dr. Dewey explains:

Hill climbing is a simple strategy, but it does not always work. One potential trap is the "foothill problem." If you are selecting whatever step takes you uphill (or in a particular direction) you might end up climbing a foothill that lies between yourself and the mountain, ignoring the much more efficient procedure of going around it. In other words, if you go straight toward a goal without flexibility, you may pay a steep price, waste a lot of energy, or cause more work for yourself without contributing to the goal.

In optimization terms, this “foothill” is called the “local maximum.” It’s the best version of your current page, one that yields diminishing returns when A/B tested further. The mountain’s peak is called the “global maximum.” That’s the best possible version of your post-click landing page. Here’s a helpful graphic to illustrate:

By starting with a single post-click landing page and A/B testing small elements one at a time to improve it, you’re getting closer to that local maximum, but this method will never get you to the peak of that mountain. So how do you navigate your way there?

A/B testing to reach the global maximum

In a blog post titled “Don’t Fall Into the Trap of A/B Testing Minutiae,” Moz’s Rand Fishkin explains a frustrating scenario that might hit too close to home:

Let's say you find a page/concept you're relatively happy with and start testing the little things - optimizing around the local minimum. You might run tests for 4-6 months, eek out a 5% improvement in your overall conversion rate and feel pretty good. Until...

You run another big, new idea in a test and improve further. Now you know you've been wasting your time optimizing and perfecting a page whose overall concept isn't as good as the new, rough, unoptimized page you've just tested for the first time.

Instead, Fishkin, like other marketing influencers, recommends A/B testing “overhauls” and “big ideas” first; in other words, testing radically different pages. Of those radically different pages, the best performer is the one closest to the global maximum. That’s the one you should fine-tune with multivariate testing: button vs. button, headline vs. headline, etc. Several case studies support this method.
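The "big ideas first, fine-tuning second" approach can be sketched as a two-step search. Everything here is an assumption made for illustration: the `landscape` of conversion rates is hypothetical, and `explore_then_refine` is a made-up name, not a feature of any testing platform.

```python
def hill_climb(scores, start):
    """Greedy fine-tuning: step to the better neighbour until none is better."""
    position = start
    while True:
        neighbours = [i for i in (position - 1, position + 1) if 0 <= i < len(scores)]
        best = max(neighbours, key=lambda i: scores[i])
        if scores[best] <= scores[position]:
            return position
        position = best


def explore_then_refine(scores, big_ideas):
    """Pick the best of several radically different pages, then fine-tune it."""
    # Step 1: A/B test the overhauls against each other and keep the winner.
    winner = max(big_ideas, key=lambda i: scores[i])
    # Step 2: improve the winner with small, incremental changes.
    return hill_climb(scores, winner)


# Hypothetical conversion rates (%) for a row of page variations.
landscape = [1.0, 1.5, 2.0, 1.8, 1.2, 2.5, 4.0, 6.0, 5.0]

small_steps_only = hill_climb(landscape, start=0)
big_ideas_first = explore_then_refine(landscape, big_ideas=[0, 4, 8])

print(landscape[small_steps_only])  # 2.0: stuck on the foothill
print(landscape[big_ideas_first])   # 6.0: fine-tuning started near the real peak
```

The fine-tuning step is identical in both runs. The only difference is where it starts, which is the point of testing overhauls before details.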

A/B testing for the global maximum

1. Facebook

In 2008, Facebook tested an entirely new navigation design. Their team moved it from the left of the page to a drop-down on the right. However, in doing so, they made the apps within the navigation less visible to users, which resulted in much less traffic to those apps. Since they were a valuable source of revenue for Facebook, that was a problem.

After trying to salvage the new design with a few engagement tricks, the optimization team realized they had hit the local maximum and scrapped the entire thing. From Facebook Product Developer, Adam Mosseri:

What we were doing here is we were optimizing for a local maximum. Within this framework, there was only so much traffic we could funnel to applications. And what we needed was a structural change. Our premise was off. Our interests were leading us down the wrong path. We didn’t realize it […] we were optimizing for something locally, and we needed to be somewhat disruptive to sort of get out of it.

2. Moz

This variation page, created by Conversion Rate Experts for Moz, was six times longer than the original. It featured more infographics, a different headline, and a video, among other elements that weren’t on the original page. It outperformed the control by 52%.

If the team had only worked on improving the elements on the original page, they never would’ve added the content that boosted conversions by half. From the testers:

In our analysis of Rand’s effective face-to-face presentation, we noticed that he needed at least five minutes to make the case for Moz’s paid product. The existing page was more like a one-minute summary. Once we added the key elements of Rand’s presentation, the page became much longer.

3. Server Density

Most A/B testing case studies you’ll find online have to do with the appearance of web pages. They’re button color vs. button color or image vs. image, and the number of them is growing each day. As a result, most people who dive into A/B testing assume that it can only be used for web design.

However, the concept of A/B testing can be applied to anything, including product design or even pricing structure, as you’ll see with this example from Server Density.

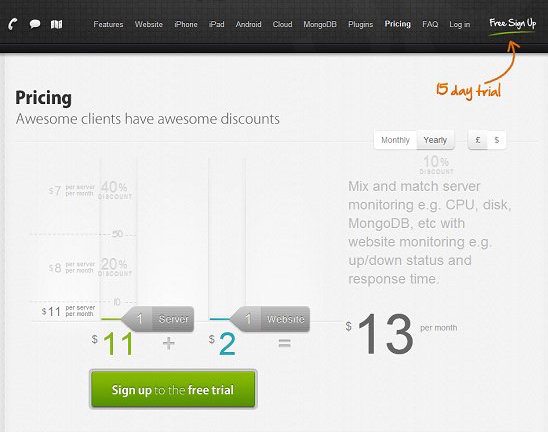

The company, a server and website monitoring service, allowed its customers to pay based on how many servers and websites they needed monitored:

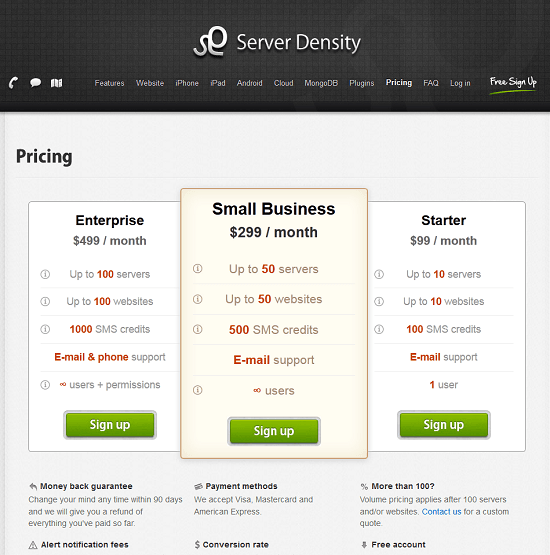

The structure was intended to broaden the customer base, which it did, but now the team’s sights were on higher revenue. So, they A/B tested a complete overhaul of their pricing, with a packaged model:

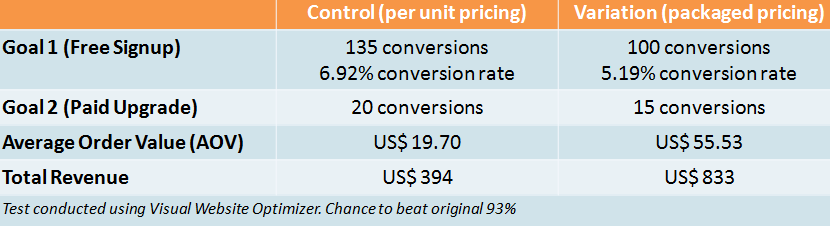

The new structure actually produced fewer conversions, but the value of each skyrocketed. Here were the results:

The average order value was now over $55, compared to $19.70 under the old structure. The takeaways remind testers of two crucial things:

A/B testing can be used for more than superficial design changes.

Conversions aren’t the be-all and end-all. Sometimes fewer conversions get you better results.
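A quick back-of-the-envelope calculation shows how fewer conversions can still mean more revenue. The two average order values come from the case study; $55 is used as a conservative floor because the result was "over $55." The order counts are hypothetical, since the post does not give them.

```python
old_aov = 19.70  # average order value under the old pricing (from the case study)
new_aov = 55.00  # "over $55" in the case study; 55 is a conservative floor

# The new pricing earns more as long as it keeps at least this share of the old orders.
break_even_ratio = old_aov / new_aov
print(f"{break_even_ratio:.1%}")  # 35.8%


def revenue(orders, average_order_value):
    """Total revenue from a number of orders at a given average order value."""
    return orders * average_order_value


# Hypothetical order counts: the new pricing converts 40% fewer customers.
old_revenue = revenue(orders=500, average_order_value=old_aov)
new_revenue = revenue(orders=300, average_order_value=new_aov)
print(new_revenue > old_revenue)  # True
```

Under these assumptions, conversions could fall by more than 60% before the packaged pricing brought in less revenue than the old model.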

How do you find the global and local maximum?

Remember: The strength of A/B testing is getting as close to the global maximum as possible with big changes. To find the local maximum, you’re better off using multivariate testing instead.

Have you fallen into the trap of testing strictly one page element at a time? Do you make multiple changes per test to find the global maximum?
