Actor Maurice Chevalier once wisely said, “If you wait for the perfect moment when all is safe and assured, it may never arrive. Mountains will not be climbed, races won, or lasting happiness achieved.”

“…nor many A/B tests concluded,” we’d like to add.

When split-testing, there’s never a “perfect” time to declare a winning variation. Sure, you could argue that moment occurs when a test has run long enough to reach statistical significance — but even then, you can’t be confident that your results are entirely accurate.

So when even the big-timers, who have access to the massive amounts of traffic needed to reach statistical significance, can’t be 100% sure of their results, how are you supposed to gain relevant insight in a timely manner from your A/B tests?

The difficulty of reaching statistical significance

In A/B testing, statistical significance tells you how likely it is that the difference between your variation and the original is real rather than the result of chance. It’s expressed as a percentage.

At a 50% level of statistical significance, you can be just 50% sure that your test results are accurate. FYI, 50% isn’t an acceptable level of significance in any profession. Neither is 75%. Nor 85%. So what is?

“Generally, tests are run at 90% statistical significance,” says Optimizely, but Peep Laja at CXL disagrees:

“Not good enough. You’re performing a science experiment here. Yes, you want it to be true. You want that 90% to win, but more important than having a ‘declared winner’ is getting to the truth.”

He goes on to add, “You should not call tests before you’ve reached 95% or higher. 95% means that there’s only a 5% chance that the results are a complete fluke.”

So, other than a 95% level of significance, what do you need to make sure you set up your test for accurate results?

- A baseline conversion rate. What’s the current conversion rate of your control page?

- A minimum detectable effect. The relative change in conversion rate that you want to be able to measure in your test.

- Traffic. Based on your level of statistical significance, minimum detectable effect, and baseline conversion rate, you can determine how many visitors each of your variations needs to produce results that you can make accurate inferences from.

For example, let’s say your control page’s baseline conversion rate is 5%. You and your team decide that you want to be able to say confidently that a relative change of 10% or more in conversion rate is due to your change, and not chance. And you want only a 5% chance that your result is a fluke, so you’re testing at a 95% level of significance.

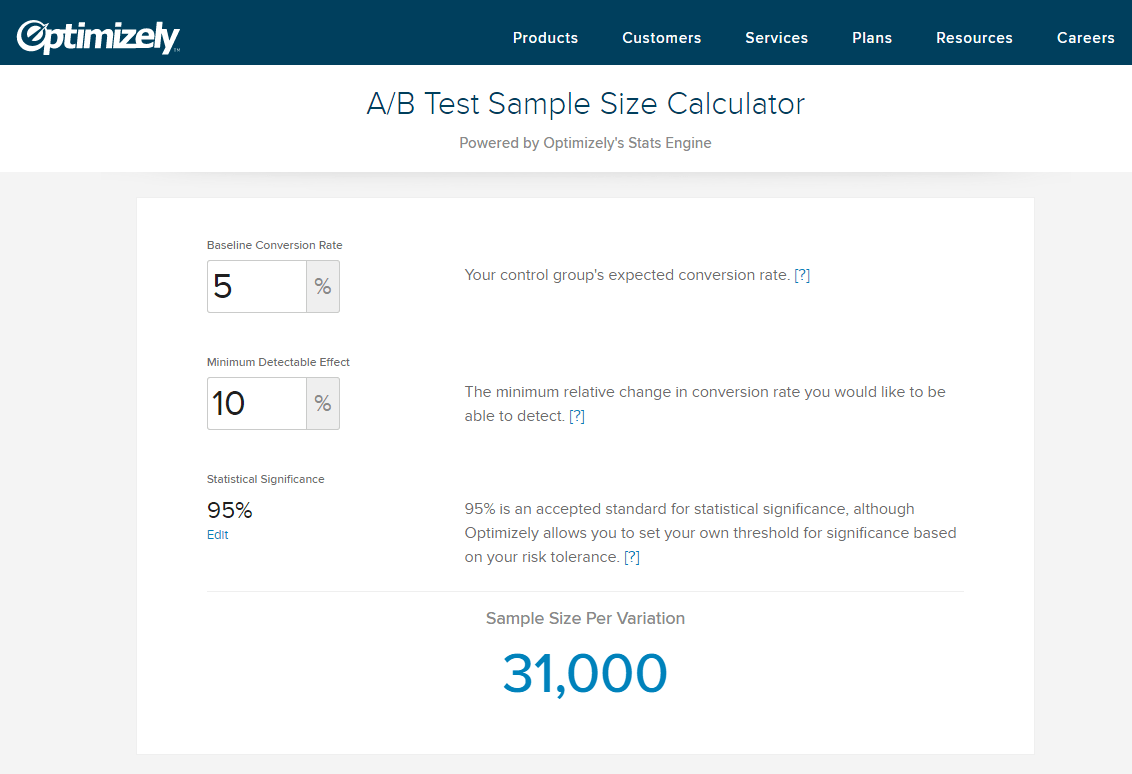

If we plug these numbers into Optimizely’s sample size calculator, here’s what we get:

So, if you’re testing two pages against each other, each needs to have a sample size of 31,000 visitors. For most businesses, that’s a pretty big number.
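To see where a number like that comes from, here is a minimal sketch of the classical fixed-sample formula for comparing two conversion rates. It is not Optimizely’s calculator, which may use a different method. The function name and the 80% statistical power are assumptions that don’t appear in the example above.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variation(baseline, relative_mde, significance=0.95, power=0.80):
    """Visitors needed per variation for a two-sided, two-proportion z-test.

    power=0.80 is an assumed default; the article does not state one.
    """
    p1 = baseline                        # control conversion rate
    p2 = baseline * (1 + relative_mde)   # rate we want to be able to detect
    p_bar = (p1 + p2) / 2
    alpha = 1 - significance
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# 5% baseline, 10% relative minimum detectable effect, 95% significance
n = sample_size_per_variation(baseline=0.05, relative_mde=0.10)
print(f"{n:,} visitors per variation")
```

Under these assumptions the result lands a little above 31,000 visitors per variation, in line with the figure quoted above.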

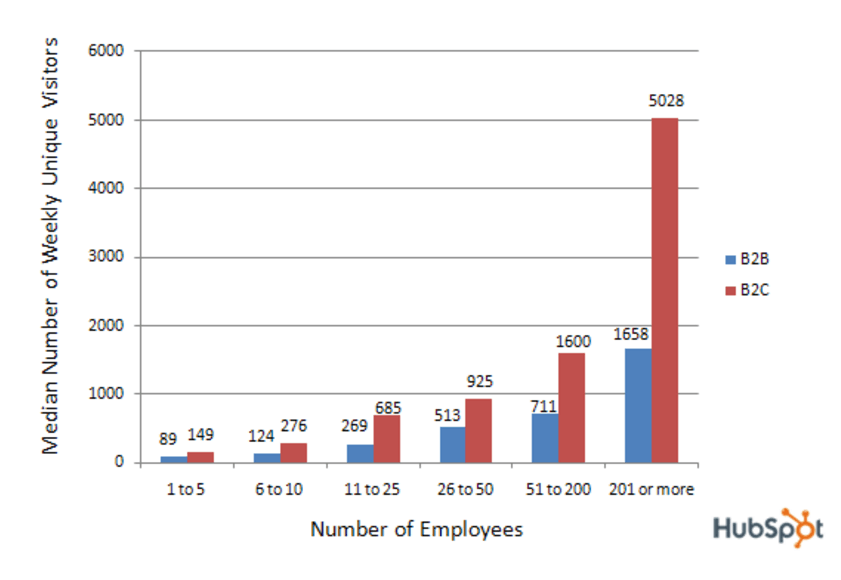

A survey of HubSpot customers a few years ago found that the median number of weekly unique visitors for small businesses are as follows:

Even if you’re a part of the largest group on this graph, and you include ALL your daily visitors in your test, it’ll take you 85 days to reach statistical significance. If you’re the smallest, it’ll take you nearly 14 years. Not all that practical, is it?
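The arithmetic behind estimates like these is simple: divide the total sample you need by your daily traffic. Here is a rough sketch. The weekly traffic figures are hypothetical placeholders, not the survey’s actual medians, and the function name is our own.

```python
from math import ceil


def days_to_reach_sample(per_variation, variations, weekly_visitors):
    """Days needed if every visitor enters the test and traffic is split evenly."""
    total_needed = per_variation * variations
    daily_visitors = weekly_visitors / 7
    return ceil(total_needed / daily_visitors)


# Hypothetical weekly traffic levels, chosen only for illustration
for weekly in (100, 1_000, 5_000):
    days = days_to_reach_sample(31_000, 2, weekly)
    print(f"{weekly:>5} visitors/week -> {days} days (~{days / 365:.1f} years)")
```

Swap in your own weekly visitor count to see how long a 31,000-per-variation test would tie up your page.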

So if you don’t generate a steady flow of visitors, does that mean you can’t A/B test at all?

Nope. You still can — you just need to do it a little differently. For your tests, you’ll have to focus less on quantity of feedback (the 31,000-visitor sample size it’ll take to reach statistical significance), and more on quality of feedback (figuring out more from each visitor).

The difference between qualitative and quantitative feedback

Quantitative feedback is all about numbers. In an A/B test, when you have enough quantitative feedback, you can start to make inferences about visitor behavior.

For instance, let’s say you’re running an A/B test on your post-click landing page using the numbers above. In it, your control features a red CTA button, and your variation features a blue one, and so far, more visitors are converting on your variation than the control. Until each page has generated 31,000 visitors, you can’t be confident that the reason they’re converting more on your variation is because of the change in button color.
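To make that concrete, here is a minimal sketch of a two-proportion z-test, one common way to check whether a gap between two conversion rates is significant. The visitor and conversion counts are hypothetical, and your testing tool may use a different statistical method.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, visitors_a, conv_b, visitors_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical early results: red button converts at 5%, blue at 6%
z_small, p_small = two_proportion_z_test(50, 1_000, 60, 1_000)

# The same rates after 31,000 visitors per page (also hypothetical)
z_large, p_large = two_proportion_z_test(1_550, 31_000, 1_860, 31_000)

for label, p in (("1,000 visitors each", p_small), ("31,000 visitors each", p_large)):
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"{label}: p = {p:.4f} -> {verdict} at the 95% level")
```

With these made-up counts, the same one-point gap counts as noise at 1,000 visitors per page and clears the 95% bar at 31,000.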

But when you’re collecting qualitative feedback, you engage more with each individual prospect to determine why they made the decisions they did. In the old days, qualitative feedback was only collectible via focus groups behind one-way mirrors. Today, marketers have a number of tools and techniques at their disposal to make the most of each visitor.

1. Use survey technology

Survey tools allow you to ask your customers questions about their user experience to determine what might be the cause of their behavior:

“Let’s say you are selling a dog training course/eBook. You have a great sales page, plenty of testimonials, excellent design, and a bonus eBook to go along with the course. However, the conversions are dismal; on some days, you don’t even have any conversions.

What can you do?

You sign up for Qualaroo and ask your visitors for feedback. Create a spot in the sidebar or on a slide-in that asks people, ‘What are the problems you are facing with this site?’”

If the answer you get is something like, “I don’t know where to submit my information,” or, “This button isn’t working,” you won’t need to hear it 31,000 times to learn that you need to make a change on your post-click landing page.

Just a few answers may reveal the reason behind your low conversion days. Use that visitor feedback to create a hypothesis about what you should change, and then run an A/B test to see if those changes make a difference.

2. Try live chat

Just as survey tools can teach you more about your prospects, so can a live support person on chat. Live chat tools allow you to automatically deploy a small pop-up chat window in the visitor’s browser, introducing you as a helpful resource when the customer encounters a point of friction.

If that happens, the idea is that they’ll ask you for assistance. If your customers keep running into the same problems, or asking the same questions, like “Does your product come with a warranty?”, it’s probably time to test a version of the page that addresses them. If you know exactly what your customers want from you, you can take much of the guesswork out of testing, and be more confident that your new version will bring a conversion lift.

3. Focus on high-impact changes

When you don’t have enough traffic, and you don’t know which changes will have the biggest impact on your conversion rate, the best thing to do is focus on the ones that have the potential to bring a big lift. You have to make that traffic count.

What are the odds a slightly smaller logo changes your conversion rate? Incredibly low. How many more people will hit your CTA button if it’s red instead of orange? Likely very few.

Make the best of your traffic by only focusing on things that are likely to bring a lift.
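There’s a statistical reason for this, too: the bigger the lift you’re trying to detect, the fewer visitors you need to detect it. The sketch below uses the classical fixed-sample formula for comparing two conversion rates, with a 5% baseline and an assumed 80% power. The function name and the lift values are illustrative, and your testing tool may calculate things differently.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variation(baseline, relative_lift, significance=0.95, power=0.80):
    """Classical two-sided sample size for comparing two conversion rates."""
    p1, p2 = baseline, baseline * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - (1 - significance) / 2)
    z_beta = NormalDist().inv_cdf(power)  # power is an assumed value
    spread = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
              + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2)))
    return ceil(spread ** 2 / (p2 - p1) ** 2)


# Illustrative relative lifts on a 5% baseline conversion rate
lifts = (0.10, 0.25, 0.50)
sizes = {lift: visitors_per_variation(0.05, lift) for lift in lifts}
for lift, n in sizes.items():
    print(f"{lift:.0%} relative lift -> {n:,} visitors per variation")
```

Under these assumptions, a change bold enough to produce a 50% relative lift needs only a small fraction of the traffic that a 10% lift does.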

4. Call your customers

Yes, it’s true, people prefer to be contacted via email over telephone — but that doesn’t mean that you won’t be able to get them on the phone. Try taking the route that Alex Turnbull from Groove did.

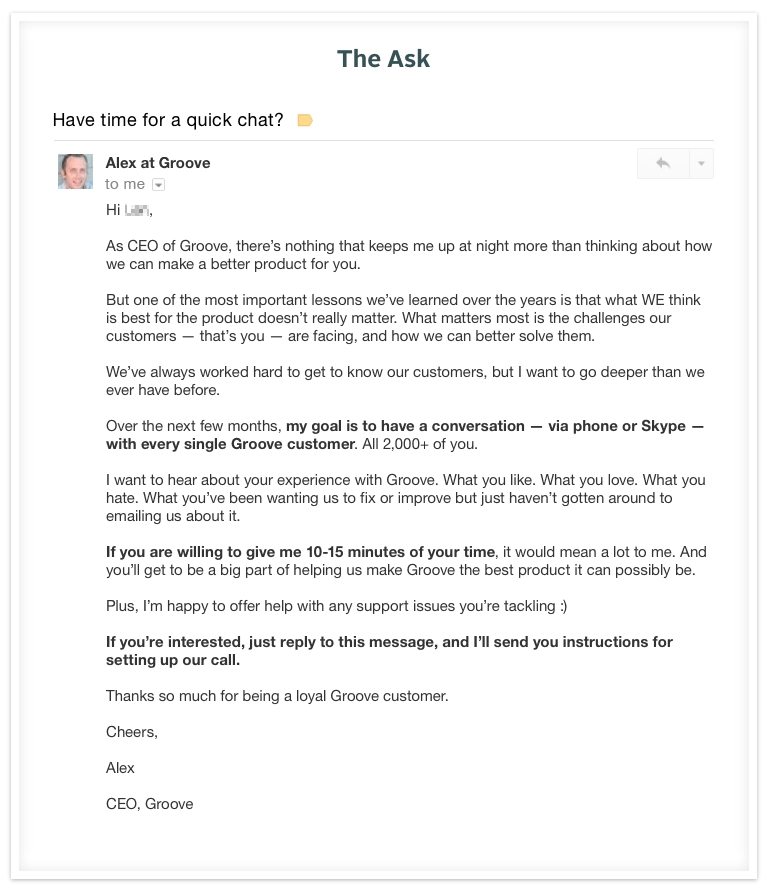

In September of 2014, he sent this email out to 2,000+ customers:

“The response,” he says, “blew me away.” In fact, it was so overwhelming that Alex realized a simple back-and-forth via email and a phone call with each customer wasn’t feasible. So, he signed up for some services to help him handle the large number of conversations he would need to have.

Armed with a pen and paper, a phone, and memberships to Join.me, Skype, and Doodle, he set out to talk to everyone who responded. In four weeks, he spent more than 100 hours speaking to customers about what they wanted to see from Groove. The results were:

- He learned that many customers didn’t realize the full functionality of Groove, repeatedly describing problems that could’ve been solved by features already incorporated into the product.

- He turned many unhappy customers into happy customers.

- He better understood Groove’s buyer personas, which allowed the company to target market segments that they hadn’t before considered.

- He built better relationships with hundreds of customers.

- He got the chance to “wow” some customers relatively quickly. Many had customer support gripes that were fixed within a matter of minutes, resulting in better brand perception.

- He learned how to improve Groove’s marketing copy. “Hearing our customer talk about the app and its benefits, along with their personal stories, challenges and goals, is the only way we can write marketing copy that actually connects,” he said. “Talking to our customers is the only way to talk like our customers talk.”

Better understanding your customers means better understanding their wants, needs, and how to communicate those online. With insight like that, you won’t need to talk to 31,000 customers. In this case, 500 short conversations were easily more valuable than tens of thousands of clicks.

5. Invest in heat mapping software

When you need to make the most of each visit, heat mapping software allows you to gain deeper insight into the behavior of your prospects. Hover maps, click maps, and scroll maps will show you what your visitors are paying attention to, where they’re clicking, and how far down your page they’re scrolling.

The only problem is, with software like this, you’ll need a few thousand visits per page before you can make accurate assumptions about the data. To get around this, Peep Laja recommends you use tools that operate algorithmically, like EyeQuant, Feng-GUI, and LookTracker.

With them, you can upload a screenshot, and the software will give you tips to improve the page. “It’s not your actual users, but it’s something,” Peep says, “and some people I trust swear on their accuracy.”

Use Instapage to build and A/B test your post-click landing pages. Request an Instapage Enterprise demo today.
